Disclaimer

Nothing on this page or produced by following the instructions contained herein constitutes medical or psychotherapeutic advice.

Modern AI chatbots in the form of “large language models” (LLMs) have led to documented cases of worsening of mental health symptoms, up to and including psychosis, attempted and completed suicide and other harms. They have been known to produce provably false information phrased in a convincing tone, to be overly agreeable, and sometimes to play into people’s own problems.

Please tread with extreme caution, use your best judgment, take regular breaks, and engage in a variety of activities off of any devices. Use multiple points of contact with consensus social reality to bring yourself back from any skewed impressions AI use may have led you to.

With that said…

I’m writing this for two reasons:

- People posting online about their experiences often describe being greatly helped by AI-assisted therapy – much more than they’ve described being harmed.

- I tried it. That experience leads me to believe what those people are saying, along with seeing some limitations that I haven’t seen other people clearly call out yet.

People posting their stories often describe improving emotion regulation, deepening reflective processes (something like interactive journaling), or keeping themselves fresh on thoughts and feelings they’re trying to get to a better place with. Some of these people believe this has produced results faster than therapy on its own would. Others say it helps them get more out of the therapy they’re already doing.

Finally, most significantly in my mind, many say it’s helping them when they couldn’t access therapy otherwise, or had been harmed in therapy and don’t want to go back.

So, I’m trying here to feed some informed and cautious advice into that process so that anyone, working with me or not, can get the most out of what they’re doing. This page will talk through some steps to do that.

Takeaways

These are mid-2026-specific as this is a rapidly developing area.

- Many people have already written in more depth than I will on possible downsides or harms (for example: Stanford study reveals that AI therapy chatbots may not only lack effectiveness compared to human therapists but could also contribute to harmful stigma and dangerous responses – 2025). Recent news stories and online accounts also suggest extreme caution if you find yourself not trusting your thoughts or losing significant sleep. Do not use it when in a crisis. Think about skipping it if you’ve ever been diagnosed with bipolar, schizophrenia or any other disorder involving psychosis, or OCD.

- If you still want to try this, please plan specific steps to get back to a calm and safe baseline (see my page on self-regulation exercises if you need ideas, and call or text a crisis line if needed).

- You can try this on your own or in combination with a therapist. Of course you know I’m going to have a bias in which to choose, but anecdotally people have reported being helped by both ways of doing it.

- Out of well-known AIs, https://claude.ai/ has the best empathy and conversation flow followed by https://chatgpt.com/ but they pose inherent privacy issues.

- “Local” models that run on your own device are good too and eliminate privacy issues. These models are now pretty good at empathizing and at specific tasks like providing coping skills, reflecting emotions back based on what’s in your own words, and summarizing. My own experiments with these models were consistent with reports posted online by people being helped by Claude but without the privacy issues.

- Using these local models requires a baseline knowledge of installing and running new software. On a computer with 8GB or more RAM, install ollama. Use it to load and run the lfm2.5-thinking model with the following command: “ollama run lfm2.5-thinking”. Ask it some questions. Try giving it directions to get it to respond in different tones or with different levels of confidence.

- lfm2.5 is the quickest to get up and running. Other models can sometimes give somewhat better answers but can take longer, or need more hardware, or take more tweaking to run smoothly (gemma4:e2b and qwen3.5:2b are good bets).

- Give your model additional concrete instructions on how to respond. That can help keep the conversation on the rails. For example: “Do not respond with undue confidence. Provide references for more information if possible. Ask clarifying questions if needed. Challenge me if my thinking on a topic seems rigid or limited.”

- Asking for help in specific ways probably gives better help on average than being general. Think: “Give me exercises to do when I’m feeling overwhelmed” or “What steps can I take to help myself get out of bed in the morning when I don’t feel like I can?”, not “How do I feel better?”

- The training used to create these models very likely has significant gaps. That’s true for both remote (Claude, ChatGPT) and local models (lfm, qwen, gemma). This doesn’t mean they’re not useful. It does mean that the kinds of use you get out of them will be skewed by what went into them. I’ll go into that in more detail, but at a high level, the types of conversation that happen online are going to be drastically overrepresented, as are inputs from the types of people who are online versus those who are not, as are certain types of therapy over others. Things that come up in short-term interactions will show up often, while the models probably have almost no knowledge specific to how relationships develop over time across a longer series of interactions. If your central mental health concern is something like family life burning you out, friendships that falter over time or explode, relationships that fizzle, or jobs that you always come to hate, LLMs are likely to be able to help with specific coping strategies while also missing any input data that would help them respond in tune with the way those subjective experiences develop over time.

- If you use LLMs to avoid what you fear, you may be trading short-term comfort against long-term progress. While advice online about making the best use of LLMs for mental health broadly surprised me with how good it is, this point is often missed. If what you’re trying to fix in your life has to do with feeling shut down, angry, afraid, ashamed or some other intense state in the presence of other people, and talking to an LLM is saving you from having to experience that with someone like a partner, coworker or therapist, then it’s actually steering you away from what’s likely to help the problem long term. While this is not most people, it’s possible for an aversion to people to be intense enough that you really are better off at the moment getting an outlet through these tools. Even if this is you, please keep the possibility in mind that at some point it might help even more to challenge yourself on surviving similar conversations with a human being.

- There are lots of other concerns about AI and LLMs more broadly, but I’m going to leave those mostly aside for the moment. If you really need an outlet, and for any reason talking to a chatbot is the best way to do that, I would put that at least momentarily ahead of concerns like energy or water consumption, even if we do need to be concerned about those in the bigger picture. I also don’t think real life human therapists are going away, but whether we are or not, harms to the profession are not the immediate concerns of people who just need help and may not have access otherwise, which is the main audience I’m addressing here.
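For anyone comfortable with a little scripting, the bullets above about giving a local model standing instructions and asking specific questions can be sketched in Python. Treat this as a minimal sketch rather than a definitive setup. It assumes Ollama is already running on your machine at its default local address and that the lfm2.5-thinking model has already been downloaded. The names `build_chat_request` and `ask_local_model` are made-up helpers for illustration, not part of Ollama.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"

# Model name taken from the text above; swap in whichever model you pulled.
MODEL = "lfm2.5-thinking"

# The standing instructions quoted in the text, sent as a "system" message.
SYSTEM_PROMPT = (
    "Do not respond with undue confidence. "
    "Provide references for more information if possible. "
    "Ask clarifying questions if needed. "
    "Challenge me if my thinking on a topic seems rigid or limited."
)


def build_chat_request(question, model=MODEL, system_prompt=SYSTEM_PROMPT):
    """Build the JSON body for one non-streaming chat request."""
    return {
        "model": model,
        "stream": False,  # ask for one complete reply instead of chunks
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": question},
        ],
    }


def ask_local_model(question, url=OLLAMA_CHAT_URL, timeout=300):
    """Send the question to the local model and return its reply text."""
    body = json.dumps(build_chat_request(question)).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        reply = json.load(response)
    return reply["message"]["content"]


# Example (needs Ollama running and the model already downloaded):
# print(ask_local_model("Give me exercises to do when I'm feeling overwhelmed"))
```

Because the request only goes to an address on your own computer, this keeps the conversation on your device, in line with the privacy point above. Editing `SYSTEM_PROMPT` is an easy way to experiment with different tones or levels of confidence.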

My history on this

What started me on this was happening across the subreddit /r/therapygpt. My first reaction was, “oh, this is not good.” But when I read their “START HERE” page, there was a lot to be impressed with. This document doesn’t read like the hype of a few years ago that this is just so obviously going to change everything, so we all need to be using it regardless of how or why. It’s more like people who have adapted their use over time, who figured out what worked and what didn’t, and are now passing that onto others with an eye to both doing greater good and preventing harm. That is a project I can get onboard with.

I went digging for how to start. Because I’m generally a privacy-minded person even in my own life outside of therapy, my first question to Dr. Google was: “how can I maintain my privacy while using an LLM?”

The answer was, generally: don’t (at least if it’s really important):

Privacy Not Included: How to Protect Your Privacy From ChatGPT and Other AI Chatbots

Then I found out about running locally. It took me a bit of learning based on resources like /r/LocalLLaMA’s best local LLMs of 2025, but I eventually found my way to Ollama (a framework to run local LLMs) and a few LLMs to run inside of it that generally had a good reputation for conversational and information quality.

What I found impressed me there too: these models are mostly able to respond in a personable, human-like tone. When I asked them for information about things like what to do in a hypothetical relationship situation, the responses were often credibly similar to things I’ve heard from textbooks and other therapists. That included suggestions for additional possibilities to consider, rather than just telling me what the model thought I should do.

This article is the result of playing with that on my own for a few weeks, reading some papers, and going back to trying less sensitive topics with cloud models. I’ve already described how to retrace my steps above and get your own local model running, and how to get cloud models to respond well to less sensitive questions. The rest of the article will talk, from the perspective of a former software developer, about what we can know about how LLMs and therapy work that can get you the best help out of either path.

How to do therapy with another human being in the loop

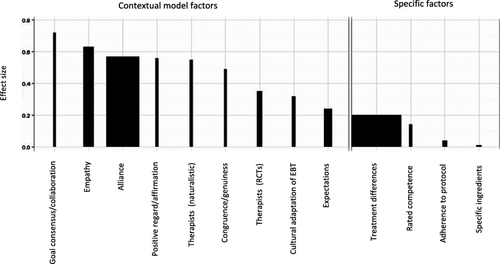

This will probably sound like bullshit if you don’t know anyone who’s experienced it, but most of the effect of therapy comes from an interpersonal exchange based around shared goals, not from techniques. There’s plenty of science to back that up (one of many summary articles here – effect sizes are in figure 1).

Some of the process does look like getting tools put in front of you to deal with specific situations better than you would on your own. Sometimes those tools do help. If you need to bring yourself back from a stressful experience, some ways of doing that are probably better than others. If you have specific experiences that lodged themselves in your brain and don’t seem to want to come out, you might be able to guide yourself through working through them, even if that process is also often easier with someone else helping. Those are just a few examples. Besides therapists like myself sometimes failing to adequately explain how therapy is supposed to work, it also seems to be a common complaint that therapists can be unwilling to provide much structure, direction, and yes, tools, even when directly asked. The science though suggests that the tools are not usually doing the bulk of the work of helping you improve your life, even if they’re helpful in some cases and valid to ask for.

It’s hard to predict exactly what the rest of the helping interpersonal exchange that’s not the tools is going to look like moment to moment between any two people. It’s also much, much harder for some people to sink into it than others. It can also seriously hurt if it doesn’t work right, which anecdotally seems to be a major contributor to instances where therapy does harm. In spite of the risks and limitations, this is where most of the generative potential of therapy comes from. No one’s figured out in advance exactly what it would look like for you to gain from experimenting with trusting someone in a way you haven’t before, saying something you haven’t been able to give voice to, or exposing yourself to a response you’ve been closed off to before. What is predictable about it though is that if you keep showing up, ride feelings of discomfort as they come up, and put genuine effort into trying to find new ways to come back to therapy after misunderstandings or difficult sessions, especially when your therapist is holding up their side of that deal, you’ll probably grow from it. The tolerance for interpersonal discomfort and the new contact with parts of yourself that are harder to develop in everyday interaction will shine through in the rest of your life outside of therapy.

What AI-assisted therapy might do well or poorly

Even people like those leading /r/therapygpt are generally not promoting an LLM as a therapist delivering therapy in the traditional sense, and I’m not either. But since people are going to them for help, let’s think about what they seem to be good or bad at out of what therapists actually do.

Take a look again at the chart from Wampold’s article I linked earlier about how big common and specific effects are in therapy:

Current LLMs actually seem to be pretty good at many of these, particularly empathy (of course no one’s describing the LLM itself as actually experiencing empathy, but that may not always matter if you’re hearing the words you know you can benefit from hearing).

The specific ways that LLMs can be good at this kind of thing, and over what time scale, depend strongly on the input they were trained on. One of the most commonly used training sets, called “Common Crawl”, intentionally consumes wide swaths of the Internet without censorship (more background). Earlier LLMs built on this kind of dataset were notorious for reproducing the kinds of toxicity and hatred that have always been easy to find in any Internet comment section – not what I want when I’m trying to feel better about myself or anything else. A bunch of more recent forces in LLM development have been pushing this in a more positive direction though. One of those is performance testing LLMs for traits like emotional intelligence and proneness to feeding mental health deterioration. With some attention being paid to this, a way to measure improvement, and a recognition that people value LLMs in part because of how well they do as conversational partners, many of the big companies involved have also started incorporating datasets that can be curated to train LLMs to respond more empathetically, and processes to incorporate guardrails that try to stop the model from producing toxic and harmful responses.

My impression is that this has helped quite a bit compared to the LLM behavior I was reading about a year or two ago.

None of the current discussion around these models will tell you though about what pieces of human verbal exchange are missing almost entirely from online content and so would almost certainly be missing from any output an LLM would ever give you. Some of the common and specific factors mentioned in therapy research above have a time scale to them. Meaningful moments of empathetic exchange, of coming back together and revisiting the point of treatment after strong disagreements can have a very different tone, shape and content when months into a therapeutic process than they did at the start. Public-facing datasets curated from freely licensed posts people have made online will not represent either side of those exchanges, even though they’re the ones that often make the biggest difference in the effect therapy has on a person’s life.

Current discussion of LLMs also usually assumes that their input and outputs both reflect a “view from nowhere”, an average of human experience that misses nothing and misrepresents nothing, even though writing from some groups is way more likely to end up online and in a training set than others’. There are exceptions, like the Common Corpus deliberately including words from cultural heritage projects. The overwhelming majority of less deliberately curated input though is going to reflect the same biases you’d get in a user-base like reddit’s: more male, more west coast, more STEM-educated, spending more time on screens than average, less religious, valuing knowledge work over manual labor or artistic output, playing more video games and watching more anime, and so on. And for whatever it’s worth: with way more info about cognitive-behavioral therapy than any other perspective on mental health.

I’m adding next to zero to the range of qualities found in human therapists or to any online conversation, so don’t take me to be saying I’m any better than anyone else who fits that description. If in some ways you’re like me or them, then maybe that’s OK or even a good thing if you want your LLM output to be responding only from a perspective like yours and not challenging you with someone else’s. It’s probably good for the sense of immediate support and empathy someone like me would get from the LLM’s default tone and knowledge, and bad for someone else’s.

None of this should turn you off of ever using an LLM for anything with therapy-like benefits. What it should do is inform how you use it and what you expect to get out of it. When what you want is an immediately available sounding board that can reply to something you can describe in a relatively brief conversation, many LLMs will have been trained to give good results. That’s probably also true for therapy-adjacent tasks like giving summaries or general recommendations for coping skills, or maybe even prompting for ideas about ways to handle a conversation or situation in a different way someone else has already thought of but that you haven’t been exposed to yet. The LLM’s helpfulness, performance and knowledge will tend to drop off as you get more into specifics that you share with relatively small groups of people, or as those issues take on a wider time scale in your life to where you’d have a hard time describing all the intersecting aspects of what you’re dealing with even over many hours of conversation.

If you do want to try to work on rare, more complex, longer running issues with an LLM’s help, you might do better by offsetting some of its weaknesses with your own strengths. If you know in advance that what it’s been fed may not be very representative of the connections and time scale between interconnected issues in your life, it may work better to pick one more specific topic at a time and then do some reflection in between LLM chats to figure out for yourself how the pieces connect. Rely on your own gut feelings and be in touch with your own uncertainties about social situations you might be bringing to an LLM chat for help.

Conclusion

I’ll be lucky if most of this is still relevant a year from now. A few aspects that I don’t expect to change are:

- Usefulness of local models as a way of preserving privacy.

- Limited representation in training data of the kinds of conversations that help with deeper or more personal issues.

- Therapy-like use of LLMs being common among people that have had bad experiences or just not been helped by talk therapy, while growing among everyone else.

- Ongoing tension between therapists who feel pressured one way or another by the existence of these tools, and attempts by the tech industry to reshape or even displace therapists’ work, sometimes to the benefit of people lacking access and sometimes not.

Please shoot me an email if I’ve missed an important point, gotten anything significantly wrong, or if you have other thoughts or comments: mailto:aaron@aaronaltmantherapy.com. I am also, of course, available for help with this in the form of ongoing therapy if that’s something you want.